When it comes to customer service, one often-overlooked principle is the power of involving the right stakeholders at the right time. Here is how that played out while we were planning my cousin’s wedding.

The Challenge: Quality on a Limited Budget

We had a clear goal: to organize a beautiful wedding on a limited budget without compromising on quality. Food was our biggest priority, so I reached out to several event management teams. Most either quoted very high prices or couldn’t guarantee the quality we wanted.

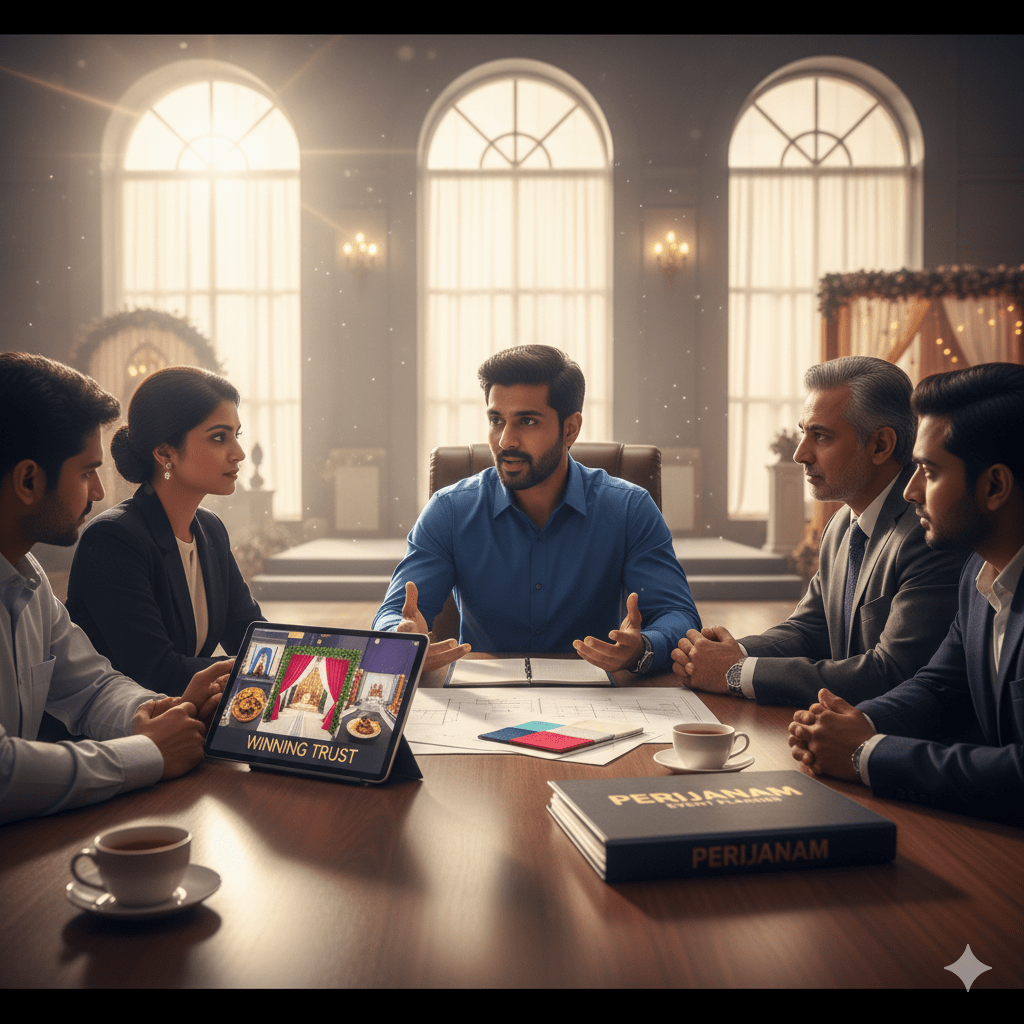

One day, I received a call from Fazil of Pee Vee Events, a company based in Perijanam, about 50 km from our venue. I was hesitant. My main concern was the freshness of the food; after all, no one wants to take chances with the meals on a wedding day.

My initial reaction? A firm “no.” The distance to their kitchen was a major red flag for food quality and freshness, especially for such an important family event. We also knew nothing about the quality of their service. However, Fazil was persistent and, more importantly, perceptive. He understood my reluctance and immediately offered guarantees: impeccable food quality, freshness, and even a promise to set up a kitchen closer to our venue.

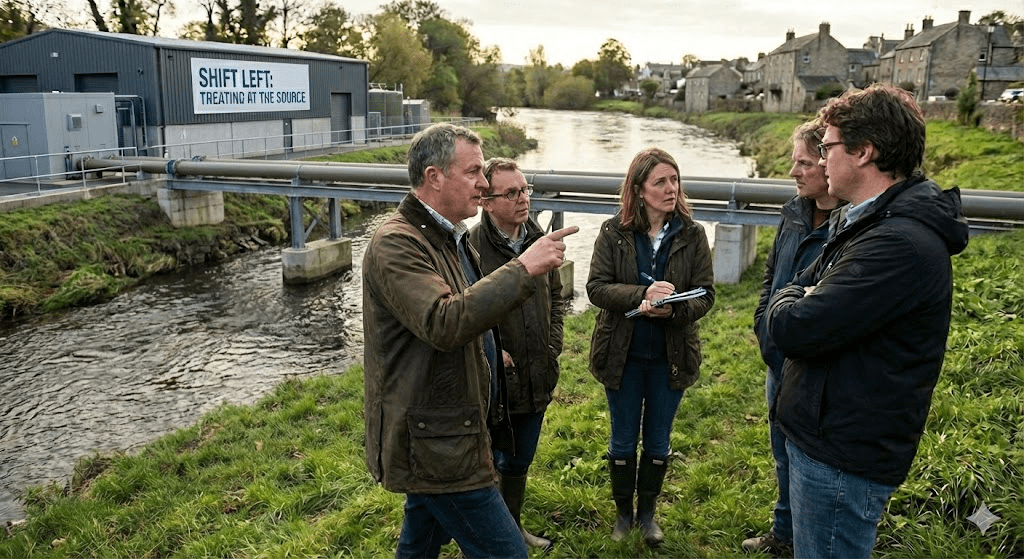

This was a good start, but what truly solidified our confidence was his next move. Fazil didn’t just ask for another phone call; he requested an in-person meeting. And he didn’t come alone.
