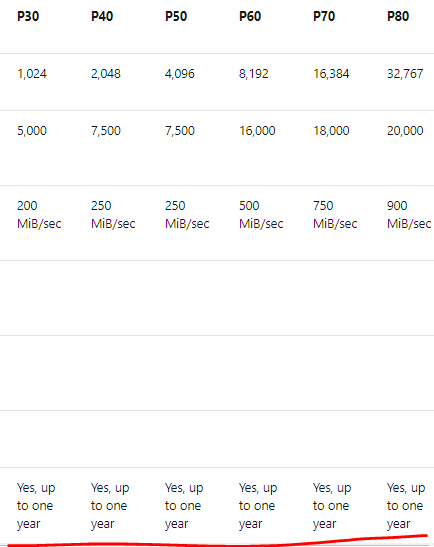

Azure Reservations help you save money by committing to one or three years of virtual machines, SQL Database compute capacity, Azure Cosmos DB throughput, or other Azure resources. You can pay for the commitment upfront or monthly, and in return you get a discount on the resources you use. Reservations can reduce your virtual machine, SQL Database compute, Azure Cosmos DB, or other resource costs by up to 72% compared with pay-as-you-go prices.

I would like to talk about how best to use reserved instances (RIs) and other techniques, such as runbooks, to bring more cost savings. We will also talk about how to decide between RIs and on-demand virtual machines (VMs).

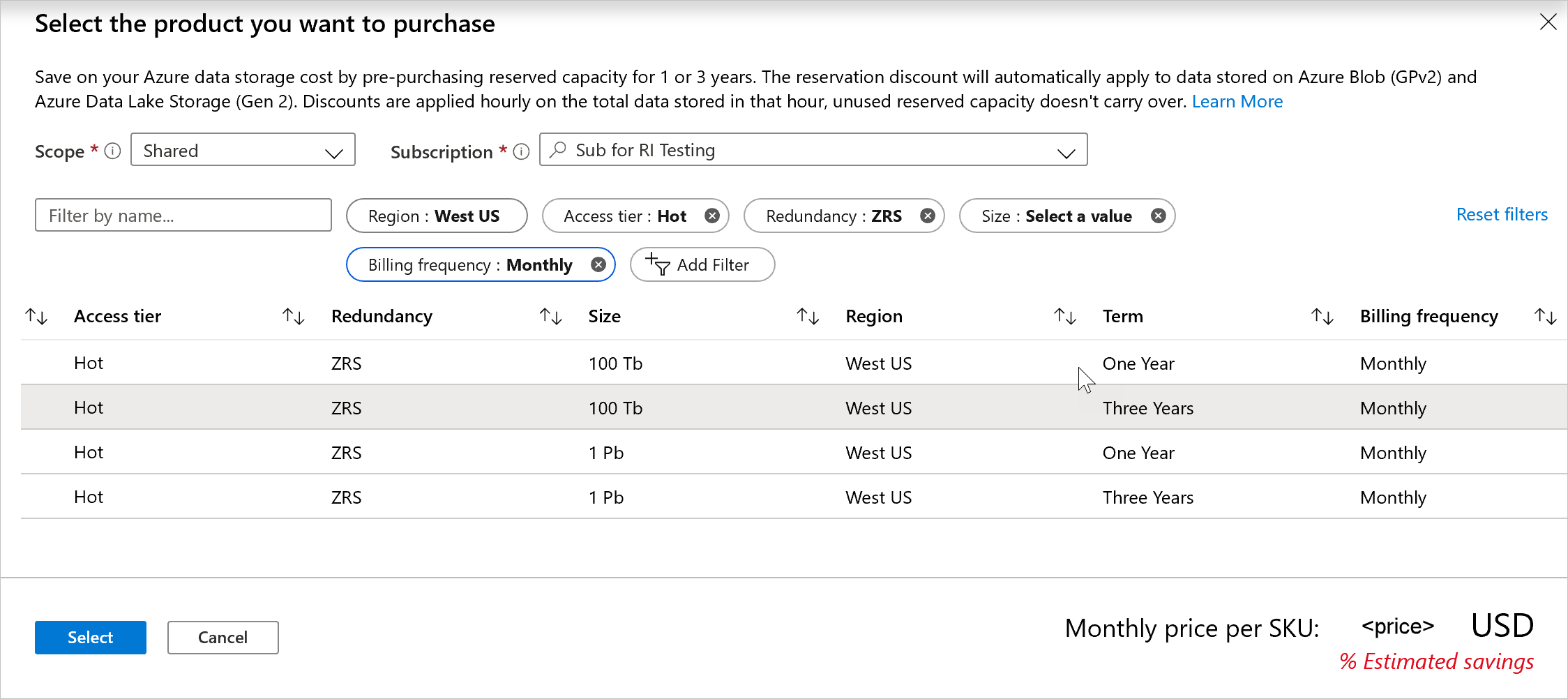

Let’s look at some of the terminology and how it is used when you buy RIs from Microsoft.

Purchasing options

- 1-year commitment – Paid upfront or monthly

- 3-year commitment – Paid upfront or monthly

Microsoft recently announced monthly payments for RIs, which is a really welcome move. You can buy new reservations with a monthly payment frequency, and you can switch existing RIs to monthly billing when you renew them.

Azure Advisor, which is available in the Azure portal for all subscriptions, gives you reservation recommendations based on your usage. However, it is better to plan ahead and select the right VM SKUs yourself. I will talk about that.

One thing you must remember is that the reservation discount is ‘USE IT OR LOSE IT’. You can’t carry forward unused reserved hours.

Generally, you do not get any benefit from an RI if the VM is not utilized above 60-70%. But I will talk about how we can still bring additional benefits in such scenarios.
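To make the 'use it or lose it' point concrete, here is a minimal Python sketch of the break-even arithmetic. The hourly rates are made-up numbers for illustration, not real Azure prices, and the function names are my own. The idea is simply that a reservation is billed for every hour whether the VM runs or not, while pay-as-you-go is billed only for the hours used.

```python
def break_even_utilization(payg_hourly: float, ri_hourly: float) -> float:
    """Fraction of hours a VM must run before the RI costs no more than pay-as-you-go."""
    if payg_hourly <= 0:
        raise ValueError("pay-as-you-go rate must be positive")
    return ri_hourly / payg_hourly


def cheaper_option(payg_hourly: float, ri_hourly: float, utilization: float) -> str:
    """Compare the effective cost per calendar hour at a given utilization (0.0 to 1.0)."""
    payg_cost = payg_hourly * utilization  # billed only for hours actually used
    ri_cost = ri_hourly                    # billed for every hour, used or not
    return "RI" if ri_cost < payg_cost else "on-demand"


# Hypothetical rates: 1.00 per hour pay-as-you-go, 0.65 per hour reserved (a 35% discount).
print(break_even_utilization(1.00, 0.65))   # utilization needed to break even
print(cheaper_option(1.00, 0.65, 0.50))     # VM runs half the time
print(cheaper_option(1.00, 0.65, 0.80))     # VM runs 80% of the time
```

With a 35% discount the break-even point is 65% utilization, which lines up with the 60-70% rule of thumb. A deeper discount lowers the break-even point, so the threshold depends on the discount you actually get.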

I will be talking only about VM RI in this blog.

To plan, you need to know a few things.
