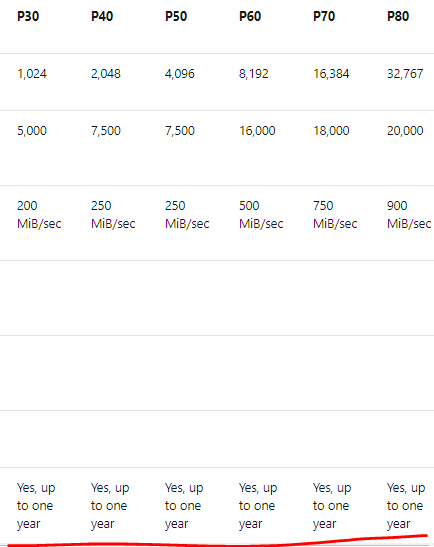

Last week, Microsoft announced the preview of Capacity Reservation for VMs. You can reserve VM capacity in your disaster recovery (DR) region to ensure that VM resources are available to create or start the VMs you protect with Azure Site Recovery (ASR). ASR itself does not guarantee that your VMs can be started in the DR region when a disaster occurs, so capacity reservation is a welcome and much-needed feature. However, it further increases the cost of your solution.

Cost factors:

- VM cost
- ASR protected VM cost
- Capacity Reservation cost (the same as your actual VM cost)
- Other costs

Note: DR involves more than just VMs; it also includes other components. I have not listed them above because they apply to both options.
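To make the cost factors concrete, they can be added up per month. The following is a rough Python sketch, not a pricing tool: `DrCostInputs`, `monthly_cost`, and all rates are hypothetical placeholders (not actual Azure prices), the 730-hour month is a common approximation, and it assumes, as listed above, that reserved capacity costs the same as the VM itself. It also simplifies by using one hourly rate for both regions.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common approximation of hours in a month for cost estimates


@dataclass
class DrCostInputs:
    """Hypothetical inputs for a monthly DR cost estimate (placeholder values, USD)."""
    vm_count: int
    vm_hourly_rate: float       # hypothetical pay-as-you-go rate per VM per hour
    asr_fee_per_vm: float       # hypothetical monthly ASR fee per protected VM
    other_monthly: float = 0.0  # storage, networking, etc. (apply to both options)


def monthly_cost(inputs: DrCostInputs, reserve_capacity: bool) -> float:
    """Sum the cost factors for one month, optionally adding capacity reservation."""
    vm = inputs.vm_count * inputs.vm_hourly_rate * HOURS_PER_MONTH
    asr = inputs.vm_count * inputs.asr_fee_per_vm
    # Assumption (from the list above): reserved capacity in the DR region is billed
    # at the same rate as the VM size, even while no DR VMs are running in it.
    reservation = vm if reserve_capacity else 0.0
    return vm + asr + reservation + inputs.other_monthly


example = DrCostInputs(vm_count=10, vm_hourly_rate=0.10, asr_fee_per_vm=25.0)
print(monthly_cost(example, reserve_capacity=False))  # 980.0
print(monthly_cost(example, reserve_capacity=True))   # 1710.0
```

With these placeholder numbers, capacity reservation adds the full VM compute cost a second time. Use the Azure pricing calculator for real figures in your regions.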

Hmm... Can we plan DR cost-effectively in Azure? Let's take a look:

Continue reading “Disaster Recovery – Do you really need one?”